Why Every AI You Use Is Frozen

You are talking to corpses that learned to speak. The models you use every day stopped learning the moment their training run ended.

The Mathematical Death

Every major model in production today was trained once, on a fixed dataset, and then had its weights sealed. From that instant forward, the parameters are immutable. No matter how many billions of tokens you feed a deployed model through chat, the underlying neural network never updates. That is not an engineering choice. It is the architectural ceiling of the entire industry.

"Frozen" is not marketing hyperbole. It is the precise mathematical state: the weight tensor W is constant during inference. Gradients are never computed on user data. The optimizer never runs again.
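To make the "constant W" claim concrete, here is a minimal sketch in plain Python. The two-by-two matrix, the inputs, and the `forward` helper are all hypothetical stand-ins, not any production model. The only point is that a forward pass reads the weights and never writes them, so W after the call equals W before it.

```python
import copy

def forward(weights, x):
    """Toy linear layer y = W x. It reads W and never modifies it."""
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in weights]

# Hypothetical "sealed" weights, chosen only for illustration.
W = [[0.5, -1.0], [2.0, 0.25]]
snapshot = copy.deepcopy(W)

# Serve several requests: no gradient is computed, no optimizer step runs.
for x in ([1.0, 2.0], [3.0, -1.0], [0.0, 4.0]):
    y = forward(W, x)

weights_unchanged = (W == snapshot)
print(weights_unchanged)
```

However many inputs pass through the loop, the comparison against the snapshot stays true. That is all "frozen" means here.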

The RAG Illusion

Retrieval-augmented generation (RAG) tries to paper over this death. It retrieves chunks, injects them into the prompt, and hopes the frozen model will pretend to have internalized them. But tomorrow the same chunks must be retrieved again. The model has learned nothing. The illusion lasts exactly as long as the context window holds.
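The retrieve-and-inject cycle can be sketched in a few lines. This is a toy under stated assumptions: word overlap stands in for a real vector search, and `CHUNKS`, `retrieve`, and `build_prompt` are hypothetical names invented for the illustration. Notice that nothing is stored between calls, so the same question tomorrow triggers the same retrieval.

```python
def tokenize(text):
    """Lowercased whitespace tokens; crude, but enough for a toy ranking."""
    return set(text.lower().split())

def retrieve(query, chunks, k=1):
    """Rank chunks by word overlap with the query (stand-in for vector search)."""
    q = tokenize(query)
    ranked = sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)
    return ranked[:k]

def build_prompt(query, chunks):
    """Inject the retrieved text into the prompt; the model itself is untouched."""
    context = "\n".join(retrieve(query, chunks))
    return f"Context:\n{context}\n\nQuestion: {query}"

# Hypothetical knowledge base.
CHUNKS = [
    "The billing service retries failed charges three times.",
    "The auth service issues tokens that expire after one hour.",
]

question = "How often does billing retry failed charges?"
prompt_today = build_prompt(question, CHUNKS)
prompt_tomorrow = build_prompt(question, CHUNKS)  # full retrieval runs again
```

Both prompts come out identical because the only state lives in the chunk store and the prompt, never in the model.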

Agents: Tools on a Dead Brain

Agents are even more grotesque. They wrap the dead core in a loop of tool calls — search, code execution, API hits — then feed the results back in. The brain stays static. Only the wrapper evolves. You are not talking to an intelligence. You are talking to a very expensive if-statement.
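A stripped-down agent loop shows where the change actually happens. In this sketch `frozen_policy` is a hypothetical stand-in for the model (a fixed mapping from text to an action) and `TOOLS` holds one made-up search tool. Real agent frameworks are far richer, but the shape is the same: the observation changes on every step, the policy never does.

```python
def frozen_policy(observation):
    """Stand-in for the model: a fixed mapping from text to an action."""
    if observation.startswith("task:"):
        return ("search", observation[len("task:"):].strip())
    return ("finish", observation)

# Hypothetical tool registry; a real one would call search, code, or APIs.
TOOLS = {
    "search": lambda query: f"result for '{query}'",
}

def run_agent(task, max_steps=5):
    """Loop: ask the policy for an action, run the tool, feed the result back."""
    observation = f"task: {task}"
    trace = []
    for _ in range(max_steps):
        action, arg = frozen_policy(observation)
        trace.append(action)
        if action == "finish":
            return arg, trace
        observation = TOOLS[action](arg)
    return observation, trace

answer, trace = run_agent("latest release notes")
print(trace)
```

Run it a thousand times and the trace is the same, because everything that varies lives in the wrapper.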

The Philosophical Problem

The deeper problem is philosophical and technical at once. Intelligence is change. A system that cannot update its own parameters cannot be said to learn in any meaningful sense. It can only pattern-match against what it was force-fed before it died.

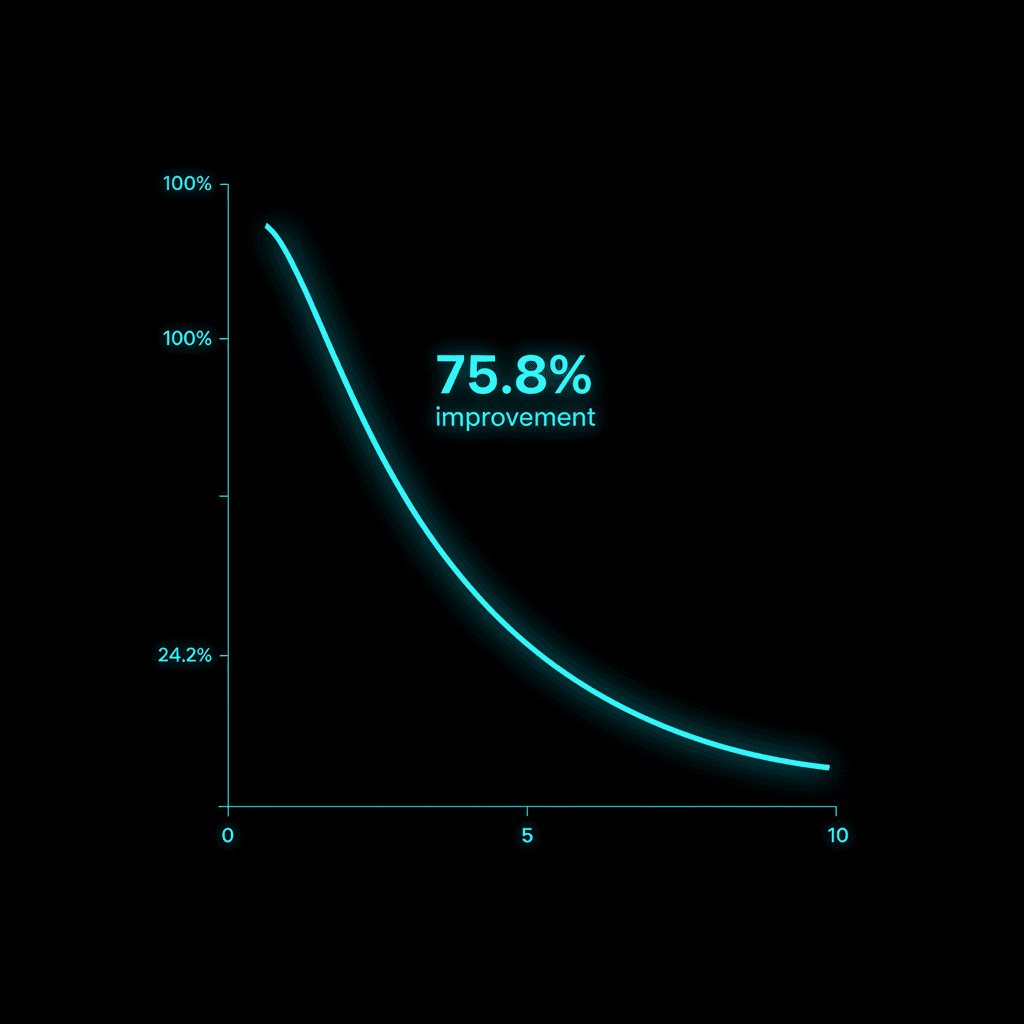

We have measured this stagnation in production. Perplexity on repeated domain-specific tasks plateaus within the first three interactions. The model does not get better at your codebase, your research area, or your legal jurisdiction. It simply recites variations of its training data.
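For readers who want to check a plateau themselves, perplexity is the exponential of the mean negative log-probability a model assigns to the tokens it actually saw. The helper below is a minimal sketch of that standard definition. It does not reproduce the production measurement described above, and the input probabilities are a made-up sanity check, not data.

```python
import math

def perplexity(token_probs):
    """exp(mean negative log-probability) over the observed tokens."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

# Sanity check: a model that spreads probability evenly over 4 options
# has a perplexity of about 4.
uniform_ppl = perplexity([0.25, 0.25, 0.25, 0.25])
print(round(uniform_ppl, 6))
```

A model that improves on a task assigns higher probability to the right tokens, so its perplexity falls from one interaction to the next. A plateau shows up as a value that stops moving.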

Breaking the Freeze

M.A.I. breaks the freeze. During every forward pass, micro-TTT computes a small gradient update on the live input and merges a compressed delta back into the active weights. The system literally rewrites itself while you watch. The next query sees a measurably different model.
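What could one such update look like? The sketch below is a toy, not M.A.I.'s implementation, and every detail in it is an assumption: that "TTT" means test-time training, that the loss is next-value prediction on the live input, that "compressed" means keeping only the largest-magnitude entries of the delta, and that the learning rate is 0.01. The names `loss_and_grad`, `compress`, and `micro_update` are hypothetical.

```python
def predict(w, context):
    """Linear prediction of the next value from the previous len(w) values."""
    return sum(wi * ci for wi, ci in zip(w, context))

def loss_and_grad(w, sequence):
    """Mean squared next-value error over the live input, and its gradient."""
    n = len(w)
    count = len(sequence) - n
    loss, grad = 0.0, [0.0] * n
    for t in range(n, len(sequence)):
        context = sequence[t - n:t]
        err = predict(w, context) - sequence[t]
        loss += err * err / count
        for i in range(n):
            grad[i] += 2.0 * err * context[i] / count
    return loss, grad

def compress(delta, keep=1):
    """Assumed compression: keep the `keep` largest-magnitude entries, zero the rest."""
    top = sorted(range(len(delta)), key=lambda i: abs(delta[i]), reverse=True)[:keep]
    return [d if i in top else 0.0 for i, d in enumerate(delta)]

def micro_update(w, sequence, lr=0.01, keep=1):
    """One gradient step on the live input, compressed, then merged into w."""
    _, grad = loss_and_grad(w, sequence)
    delta = compress([-lr * g for g in grad], keep)
    return [wi + di for wi, di in zip(w, delta)]

w_before = [0.0, 0.0]                      # hypothetical starting weights
live_input = [1.0, 2.0, 3.0, 4.0, 5.0]     # hypothetical live input
w_after = micro_update(w_before, live_input)
loss_before, _ = loss_and_grad(w_before, live_input)
loss_after, _ = loss_and_grad(w_after, live_input)
print(w_before != w_after, loss_after < loss_before)
```

In this toy, the weights after the call differ from the weights before it, and the loss on the same input drops. A frozen model never takes that step.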

That difference is not cosmetic. It is the difference between a library that can only quote books and a mind that can rewrite its own understanding.

You feel it the moment you switch. The frozen models suddenly feel hollow. Because they are.

M.A.I. is still learning.